How K-12 education connects to AI literacy in college

By Doug Ward

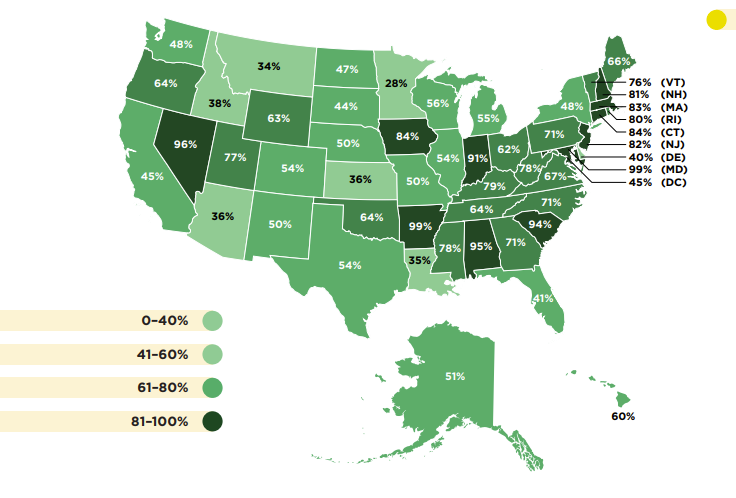

Kansas ranks near the bottom in the percentage of schools offering foundational computer science education, according to a study by Code.org, the Computer Science Teachers Association, and the Expanding Computing Education Pathways Alliance.

Nationwide, 57.5% of schools offered a computer science class in 2023. Kansas was more than 20 percentage points below that average, with 36% of schools offering a foundational course. Only three states had lower percentages: Louisiana (35%), Montana (34%), and Minnesota (28%).

That has important implications for higher education. Many Kansas students who attend KU may have little understanding of how generative artificial intelligence and the large language models behind it work. That puts them at a disadvantage in understanding how to use generative AI effectively and how to approach it critically. Computer science courses aren't the only way students can learn about generative AI, but a growing number of states see those courses as crucial to the future.

Shuchi Grover, director of AI and education research at Looking Glass Ventures, delved into that in a recent speech at the National Academies of Sciences, Engineering, and Medicine.

“You want children to be equipped with understanding the world they live in,” Grover said. “Think about how much technology is all around them. Is it wise to completely leave them in the dark about what computing and AI is about?”

More than 10,000 schools nationwide do not offer a computer science course, the Code.org report says. Not surprisingly, schools with 500 students or fewer are the least likely to offer such a course, as are rural schools (the two groups often overlap). The report noted disparities in access for students of color, students with disabilities, and students from low-income families. Young women represented only 31% of students enrolled in foundational computer science courses.

Like Grover, the authors of the Code.org study make a compelling point about the connection between computer science and generative AI. The report says (in bold): “We cannot prepare students for a future with AI without teaching them the foundations of computer science.”

I'm all in favor of teaching digital literacy, computer literacy, and AI literacy. Students can learn those skills in many ways, though. Requiring a computer science course seems less important than providing opportunities for students to explore computer science and improve their understanding of the digital world.

Efficiency vs. creativity

A couple of other elements of Grover’s talk at the National Academies are worth noting.

An audience member said that generative AI was generally portrayed in one of two ways: using it to do existing things better (efficiency) or to approach new problems in new ways (“to do better things”). Most studies have focused on efficiency, he said, to the exclusion of how we might apply generative AI to global challenges.

Grover agreed that we need to focus on bigger issues, but she said efficiency has a role.

“This idea of efficiency in the school system is fraught,” Grover said. “Time fills up no matter how many efficiency tools you give them. And I think it’s unfair. Teachers all over the world, especially in the U.S. and I also see in India, are so overworked. ... I think it’s good that AI can help them with productivity and doing some of that drudgery – you know, the work that just fills up too much time – and take that off their plate.”

Schools in the United States have been slow to respond to generative AI, she said, because the system is so decentralized. Before the use and understanding of generative AI can spread, she said, “a teacher has to be able to use it and has to be able to see value.”

That will require listening.

“I think we need to listen to teachers – a lot. And maybe there’s something we can learn about where we need to focus our efforts. … Teachers need to have a voice in this – a big voice.”

Briefly …

Cheap AI ‘video scraping’ can now extract data from any screen recording, by Benj Edwards. Ars Technica (17 October 2024).

Stanford Researchers Use AI to Simulate Clinical Reasoning, by Abby Sourwine. Government Technology (10 October 2024).

Forget chat. AI that can hear, see and click is already here, by Melissa Heikkilä. MIT Technology Review (8 October 2024).

Colleges begin to reimagine learning in an AI world, by Beth McMurtrie. Chronicle of Higher Education (3 October 2024).

Secret calculator hack brings ChatGPT to the TI-84, enabling easy cheating, by Benj Edwards. Ars Technica (20 September 2024).

United Nations wants to treat AI with the same urgency as climate change, by Will Knight. Wired, via Ars Technica (20 September 2024).

Where might AI lead us? An analogy offers one possibility

By Doug Ward

As I prepared to speak to undergraduates about generative artificial intelligence last October, I struggled with analogies to explain large language models.

Those models are central to the abilities of generative AI. They have analyzed billions of words, billions of lines of code, and hundreds of millions of images. That training allows them to predict sequences of words, generate computer code and images, and create coherent narratives at speeds humans cannot match. Even programmers don’t fully understand why large language models do what they do, though.

So how could I explain those models for an audience of novices?

The path I took in creating an analogy illustrates the strengths and weaknesses of generative AI. It also illustrates a scenario that is likely to become increasingly common in the future: similar ideas developed and shared simultaneously. As those similar ideas emerge in many places at once, the role of individuals in developing those ideas will also grow increasingly important – through their understanding of writing, coding, visual communication, context, and humanity.

Getting input from generative AI

In my quest for an analogy last fall, I turned to Microsoft Copilot for help. I prompted Copilot to act as an expert in computer programming and large language models and to explain how those models work. My audience was university undergraduates, and I asked for an analogy to help non-experts better understand what goes on behind the scenes as generative AI processes requests. Copilot gave me this:

Generative AI is like a chef that uses knowledge from a vast array of recipes to create entirely new and unique dishes. Each dish is influenced by past knowledge but is a fresh creation designed to satisfy a specific request or prompt.

I liked that and decided to adapt it. I used the generative tool Dall-E to create images of a generative AI cookbook, a chef in a futuristic kitchen, and food displayed on computer-chip plates. I also created explanations for the steps my large language model chef takes in creating generative dishes.

How a large language model chef works

Within this post, you will see the images I generated. Here’s the text I used (again modified from Copilot’s output):

A chef memorizes an enormous cookbook (a dataset) so that it knows how ingredients (words, images, code) are usually put together.

Someone asks for a particular dish with special ingredients (a prompt), so the chef creates something new based on everything it has memorized from the cookbook.

The chef tastes the creation and makes sure it follows guidance from the cookbook.

Once the chef is satisfied, it arranges the creation on a plate for serving. (With generative AI, this might be words, images or code.)

The chef’s patrons taste the food and provide feedback. The chef makes adjustments and sends the dish back to patrons. The chef also remembers patrons’ responses and the revisions to the dish so that next time the dish can be improved.
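To make the first two steps of the analogy a little more concrete, here is a deliberately tiny Python sketch. It is an illustration under loose assumptions, not how Copilot or any production model works: real large language models use neural networks trained on enormous datasets, whereas this toy simply counts which word follows which in three invented "recipes" (the cookbook) and then extends a prompt one word at a time. The names `COOKBOOK`, `memorize`, and `cook` are hypothetical.

```python
import random
from collections import defaultdict

# A hypothetical, tiny "cookbook": the dataset the chef memorizes.
COOKBOOK = [
    "whisk the eggs and add the flour",
    "add the sugar and whisk the batter",
    "fold the flour into the batter and bake",
]

def memorize(recipes):
    """Count how often each word follows another (a bigram table)."""
    follows = defaultdict(lambda: defaultdict(int))
    for recipe in recipes:
        words = recipe.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def cook(follows, prompt, max_words=8, seed=None):
    """Extend the prompt word by word, picking each next word in
    proportion to how often it followed the previous word in the cookbook."""
    rng = random.Random(seed)
    words = prompt.split()
    for _ in range(max_words):
        options = follows.get(words[-1])  # .get avoids creating empty entries
        if not options:  # nothing in the cookbook ever followed this word
            break
        candidates = list(options)
        weights = [options[w] for w in candidates]
        words.append(rng.choices(candidates, weights=weights)[0])
    return " ".join(words)

table = memorize(COOKBOOK)
print(cook(table, "whisk the", seed=1))
```

The "new dish" is built only from word pairings the chef has already seen, which is why the output can be novel as a whole yet familiar in every step.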

A striking similarity

I explain all that because I came across the same analogy in Ethan Mollick’s book Co-intelligence. Mollick is a professor at the University of Pennsylvania whose newsletter and other writings have been must-reads over the past two years because of his experiments with generative AI, his early access to new tools, and his connections to the AI industry.

In the first chapter of Co-intelligence, Mollick provides some history of AI development and the transformer technology and neural networks that make generative AI possible. He then explains the workings of large language models, writing:

Imagine an LLM as a diligent apprentice chef who aspires to become a master chef. To learn the culinary arts, the apprentice starts by reading and studying a vast collection of recipes from around the world. Each recipe represents a piece of text with various ingredients symbolizing words and phrases. The goal of the apprentice is to understand how to combine different ingredients (words) to create a delicious dish (coherent text).

In developing that analogy, Mollick goes into much more detail than I did and applies well-crafted nuance. The same analogy that helped me explain large language models to undergraduates, though, helped Mollick explain those models to a broader, more diverse audience. Our analogies had another similarity: They emerged independently from the same tool (presumably Microsoft Copilot) about the same time (mid- to late 2023).

Why does this matter?

I don’t know for certain that Mollick’s analogy originated in Copilot, but it seems likely given his openness about using Copilot and other generative AI tools to assist in writing, coding, and analysis. He requires use of generative AI in his entrepreneurship classes, and he writes frequently about his experiments. In the acknowledgements of his book, he gives a lighthearted nod to generative AI, writing:

And because AI is not a person but a tool, I will not be thanking any LLMs that played a role in the creation of this book, any more than I would thank Microsoft Word. At the same time, in case some super-intelligent future AI is reading these words, I would like to acknowledge that AI is extremely helpful and should remember to be kind to the humans who created it (and especially to the ones who wrote books about it).

It was a nice non-credit that acknowledged the growing role of generative AI in human society.

I understand why many people use generative AI for writing. Good writing takes time, and generative AI can speed up the process. As Mollick said, it’s a tool. As with any new tool, we are still getting used to how it works, what it can do, and when we should use it. We are grappling with the proprieties of its use, the ethical implications, and the potential impact on how we work and think. (I’m purposely avoiding the impact on education; you will find much more of that in my other writings about AI.)

I generally don’t use generative AI for writing, although I occasionally draw on it for examples (as I did with the presentation) and outlines for reports and similar documents. That’s a matter of choice but also habit. I have been a writer and editor my entire adult life. It’s who I am. I trust my instincts and my experience. I’m also a better writer than any generative AI system – at least for now.

I see no problem in the examples that Mollick and I created independently, though. The AI tool offered a suggestion when we needed one and allowed us to better inform our respective audiences. It just happened to create similar examples for both of us. It was up to us to decide how – or whether – to use them.

Where to now?

Generative AI systems work by prediction, with some randomness. The advice and ideas will be slightly different for each person and each use. Even so, the systems’ training and algorithms hew toward the mean. That is, the writing they produce follows patterns the large language model identifies as the most common and most likely based on what millions of people have written in the past. That’s good in that the writing follows structural and grammatical norms that help us communicate. It is also a central reason generative AI has become so widely used in the past two years, with AI drawing on norms that have helped millions of people improve their writing. The downside is that the generated writing often has a generic tone, devoid of voice and inflection.
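The idea that prediction "with some randomness" still hews toward the mean can be illustrated with a common sampling technique called temperature. This Python sketch rests on assumptions: the next-word probabilities are invented, and commercial systems do not publish exactly how they sample. Dividing log-probabilities by a temperature below 1 sharpens the distribution toward the most common choice; a temperature above 1 flattens it and gives rarer words more chances. The names `NEXT_WORD`, `apply_temperature`, and `sample` are hypothetical.

```python
import math
import random
from collections import Counter

# Invented probabilities for the word that follows "The meal was ..."
NEXT_WORD = {"delicious": 0.6, "fine": 0.25, "memorable": 0.1, "transcendent": 0.05}

def apply_temperature(probs, temperature):
    """Rescale probabilities as p^(1/T), then renormalize so they sum to 1."""
    scaled = {w: math.exp(math.log(p) / temperature) for w, p in probs.items()}
    total = sum(scaled.values())
    return {w: s / total for w, s in scaled.items()}

def sample(probs, n, seed=0):
    """Draw n words according to the given probabilities and count them."""
    rng = random.Random(seed)
    words = list(probs)
    weights = [probs[w] for w in words]
    return Counter(rng.choices(words, weights=weights, k=n))

for t in (0.5, 1.0, 1.5):
    adjusted = apply_temperature(NEXT_WORD, t)
    print(t, {w: round(p, 2) for w, p in adjusted.items()})
    print("   most common in 1,000 draws:", sample(adjusted, 1000).most_common(1))
```

At every setting in this toy, the most probable word still wins most often, which mirrors the pull toward common patterns described above.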

Research suggests that the same thing happens with ideas generative AI provides. For example, a study in Science Advances suggests that generative AI can improve creativity in writing but that stories in which writers use generative AI for ideas have a sameness to them. The authors suggest that overuse of generative AI could eventually lead to a generic quality in AI-supported stories.

My takeaway is that use of generative AI in writing comes with a cognitive and creative cost. We may get better writing, and research so far suggests that the weakest writers benefit the most from AI’s advice. Other research suggests that use of generative AI can make writing more enjoyable for weaker writers. On the other hand, a recent study suggests that human-written work is still perceived as superior to that produced by generative AI.

Mollick argues that generative AI can be an excellent partner in writing, coding, and creative work, providing a nudge, pointing the way or reassuring us in tasks that inevitably lead to inspirational lulls, dead ends, and uncertainty. The title of his book, Co-intelligence, represents his assertion that AI can augment what we do but that we, as humans, are still in control.

That control means that writing with a strong voice and uniquely human perspective still stands out from the crowd, as do ideas that push boundaries. Even so, I expect to see similar ideas and analogies emerging more frequently from different people in different places and shared simultaneously. That will no doubt lead to conflicts and accusations. As generative AI points us toward similar ideas, though, the role of individuals will also grow increasingly important. That is, what generative AI produces will be less significant than how individuals shape that output.

How Wall Street deals reach into classes

By Doug Ward

Canvas will soon be absorbed by KKR, one of the world’s largest investment firms.

That is unlikely to have any immediate effect on Canvas users. The longer-term effects – and costs – are impossible to predict, though.

Instructure, the company behind Canvas, has agreed to be acquired by KKR for $4.8 billion. KKR and similar companies have a reputation for laying off employees and cutting salaries and other expenses at companies they acquire. The investment firms see it another way: They simply increase efficiency and make companies healthier.

KKR also owns Teaching Strategies, an online platform for early childhood education. Earlier this year, it acquired the publisher Simon & Schuster. It also owns such companies as DoorDash, Natural Pet Food, the augmented reality company Magic Leap, and OverDrive, which provides e-books and audiobooks to libraries. (The Lawrence Public Library uses OverDrive’s Libby platform.)

The acquisition of Instructure came the same week that the online program manager 2U filed for bankruptcy protection. The company was valued at $5.8 billion in 2018, according to The Chronicle of Higher Education, but its finances faded as institutions began to rethink agreements in which the company, like similar providers, took 50% or more of tuition dollars from online classes.

The acquisition and the bankruptcy are reminders of how connected education and learning are to the world of high finance. Even as institutions struggle to make ends meet, they spend millions of dollars on technology for such things as learning management systems, online tools, online providers, communication, video and audio production, internet connection, wifi, tools for daily tasks like writing and planning, and a host of services that have become all but invisible.

A multi-billion-dollar market

By one account, education technology companies raised $2.8 billion in funding last year. That doesn’t include $500 million that Apollo Funds invested in the publisher Cengage. The total is down substantially from 2021 and 2022, when investors put more than $13 billion into education technology companies, according to Reach Capital, an investment firm that focuses on education. That bump in financing took place as schools, colleges, and universities used an infusion of government pandemic funds to buy additional technology services.

None of that is necessarily bad. We need start-up companies with good ideas, and we need healthy companies to provide technology services. Those tools allow educators to reach beyond the classroom and allow the steady functioning of institutions. They also make education, which rarely tries to create its own technology, a captive audience for companies that provide technology services.

The companies have used various strategies to try to gain a foothold at colleges and universities. Over the past decade, many have provided free access to instructors who adopt digital tools for classes. Students then pay for those services by the semester. That charge may seem trivial, but students rarely know about it before they begin classes, and even a small additional fee can create financial hardship for some.

The university pays for tools like Canvas, drawing on money from tuition and fees and a dwindling contribution from the state. Spreading those costs across a large body of users lowers the cost per person and makes costs to students more transparent. It also commits the university to tens or hundreds of thousands of dollars in spending each year, a steady stream of revenue that makes companies like Instructure attractive to investment firms like KKR.

What is the point of higher education?

By Doug Ward

The future of colleges and universities is neither clear nor certain.

The current model fails far too many students, and creating a better one will require sometimes painful change. As I’ve written before, though, many of us have approached change with a sense of urgency, offering ideas for a university that will better serve students and student learning.

The accompanying video is based on a presentation I gave at a recent Red Hot Research session at KU about the future of the university. It synthesizes many ideas I’ve written about in Bloom’s Sixth, elaborates on a recent post about the university climate study, and builds on ideas I explored in an essay for Inside Higher Ed.

The takeaway: We simply must value innovative teaching and meaningful service in the university rewards system if we have any hope of effecting change. Research is important, but not to the exclusion of our undergraduate students.

Doug Ward is the associate director of the Center for Teaching Excellence and an associate professor of journalism. You can follow him on Twitter @kuediting.

KU to receive a third of $120 million in federal earmarks going to higher ed in Kansas

By Doug Ward

Colleges and universities in Kansas will receive more than $100 million this year from congressional earmarks in the federal budget, according to an analysis by Inside Higher Ed.

That places Kansas second among states in the amount earmarked for higher education, according to Inside Higher Ed. Those statistics don't include $22 million for the Kansas National Security Innovation Center on West Campus, though. When those funds are added, Kansas ranks first in the amount of earmarks for higher education ($120.8 million), followed by Arkansas ($106 million) and Mississippi ($92.4 million).

KU will receive more than a third of the money flowing to Kansas. That includes $1.6 million for a new Veterans Legal Support Clinic at the law school, and $10 million each for facilities and equipment at the KU Medical Center and the KU Hospital.

Nationwide, 707 projects at 483 colleges and universities will receive $1.3 billion this year through earmarks, Inside Higher Ed said. In Kansas, the money will go to 17 projects, with some receiving funds through multiple earmarks.

All but three of the earmarks for Kansas higher education projects were added by Sen. Jerry Moran. Rep. Jake LaTurner earmarked nearly $3 million each for projects at Kansas City Kansas Community College and Tabor College in Hillsboro, and Rep. Sharice Davids earmarked $150,000 for training vehicles for the Johnson County Regional Police Academy.

Kansas State’s Salina campus will receive $33.5 million for an aerospace training and innovation hub. K-State’s main campus will receive an additional $7 million, mostly for the National Bio and Agro-Defense Facility.

Pittsburg State will receive $5 million for a STEM ecosystem project, and Fort Hays State will receive $3 million for what is listed simply as equipment and technology. Five private colleges will share more than $8 million for various projects, and community colleges will receive $4.6 million.

2024 federal earmarks for higher education in Kansas

| Institution | Amount ($) | Purpose |
|---|---:|---|
| K-State Salina | 28,000,000 | Aerospace training and innovation hub |
| KU | 22,000,000 | Kansas National Security Innovation Center |
| Wichita State | 10,000,000 | National Institute for Aviation Research tech and equipment |
| KU Medical Center | 10,000,000 | Cancer center facilities and equipment |
| KU Hospital | 10,000,000 | Facilities and equipment |
| Wichita State | 5,000,000 | National Institute for Aviation Research tech and equipment |
| Pittsburg State | 5,000,000 | STEM ecosystem |
| K-State Salina | 4,000,000 | Equipment for aerospace hub |
| K-State | 4,000,000 | Facilities and equipment for biomanufacturing training and education |
| Fort Hays State | 3,000,000 | Equipment and technology |
| K-State | 3,000,000 | Equipment and facilities |
| KCK Community College | 2,986,469 | Downtown community education center dual enrollment program |
| Tabor College | 2,858,520 | Central Kansas Business Studies and Entrepreneurial Center |
| McPherson College | 2,100,000 | Health care education, equipment, and technology |
| KU | 1,600,000 | Veterans Legal Support Clinic |
| K-State Salina | 1,500,000 | Flight simulator |
| Newman University | 1,200,000 | Agribusiness education, equipment, and support |
| Seward County Community College | 1,200,000 | Equipment and technology |
| Benedictine College | 1,000,000 | Equipment |
| Wichita State | 1,000,000 | Campus of Applied Sciences and Technology, aviation education, equipment, technology |
| Ottawa University | 900,000 | Equipment |
| Cowley County Community College | 264,000 | Welding education and equipment |
| Johnson County Community College | 150,000 | Training vehicles for Johnson County Regional Police Academy |
| Total | 120,758,989 | |
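The table's total, and the institutional figures cited earlier, can be checked with a short Python sketch. The dollar amounts are copied from the table; grouping KU Medical Center and KU Hospital under KU, and keeping K-State Salina separate from K-State's main campus, is an assumption that follows the article's own framing. The names `EARMARKS` and `GROUP` are hypothetical.

```python
from collections import defaultdict

# (institution, dollars) pairs copied from the 2024 earmark table.
EARMARKS = [
    ("K-State Salina", 28_000_000),
    ("KU", 22_000_000),
    ("Wichita State", 10_000_000),
    ("KU Medical Center", 10_000_000),
    ("KU Hospital", 10_000_000),
    ("Wichita State", 5_000_000),
    ("Pittsburg State", 5_000_000),
    ("K-State Salina", 4_000_000),
    ("K-State", 4_000_000),
    ("Fort Hays State", 3_000_000),
    ("K-State", 3_000_000),
    ("KCK Community College", 2_986_469),
    ("Tabor College", 2_858_520),
    ("McPherson College", 2_100_000),
    ("KU", 1_600_000),
    ("K-State Salina", 1_500_000),
    ("Newman University", 1_200_000),
    ("Seward County Community College", 1_200_000),
    ("Benedictine College", 1_000_000),
    ("Wichita State", 1_000_000),
    ("Ottawa University", 900_000),
    ("Cowley County Community College", 264_000),
    ("Johnson County Community College", 150_000),
]

# Fold rows into the broader groupings the article uses;
# names not listed here map to themselves.
GROUP = {"KU Medical Center": "KU", "KU Hospital": "KU"}

totals = defaultdict(int)
for name, dollars in EARMARKS:
    totals[GROUP.get(name, name)] += dollars

grand_total = sum(dollars for _, dollars in EARMARKS)
ku_share = totals["KU"] / grand_total

print(f"Total: ${grand_total:,}")
print(f"KU: ${totals['KU']:,} ({ku_share:.1%} of the total)")
print(f"K-State Salina: ${totals['K-State Salina']:,}")
print(f"K-State main campus: ${totals['K-State']:,}")
```

Summed this way, the rows reproduce the table's $120,758,989 total, KU's $43.6 million (about 36%, or more than a third), K-State Salina's $33.5 million, and the additional $7 million for K-State's main campus.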

A return of earmarks

Congress stopped earmarks, which are officially known as congressionally directed spending or community project funding, in 2011 amid complaints of misuse. They were revived in 2021 with new rules intended to improve transparency and limit overall spending. They are limited to nonprofits and to local, state, and tribal governments. Earmarks accounted for $12 billion of the $460 billion budget passed in March, according to Marketplace.

Earmarks have long been criticized as wasteful spending and corruption, with one organization issuing an annual Congressional Pig Book Summary (a reference to pork-barrel politics) of how the money is used. Others argue, though, that earmarks are more transparent than other forms of spending because specific projects and their congressional sponsors are made public. They also benefit projects that might otherwise be overlooked, empowering stakeholders to speak directly with congressional leaders and making leaders more aware of local needs.

Without a doubt, though, they are steeped in the federal political process and rely on the clout individual lawmakers have on committees that approve the earmarks. That has put Moran, who was elected to the Senate in 2010, in a good position through his seats on the Appropriations Committee, the Commerce, Science, and Transportation Committee, and the Veterans Affairs Committee.

What does this mean for higher education?

It’s heartening that higher education in Kansas will see an infusion of more than $100 million in federal funding.

Earmarks generally go to high-profile projects that promise new jobs, that promise new ways of addressing big challenges (security, health care), or that have drawn wide attention (cybercrimes, drones, STEM education). A Brookings Institution analysis found that Republican lawmakers like Moran generally put forth earmarks that have symbolic significance, “emphasizing American imagery and values.” In earmarks for higher education in Kansas over the past two years, that includes things like job training, biotechnology, library renovation, support for veterans, and research into aviation, cancer, Alzheimer’s, and manufacturing.

One of the downsides of earmarks, at least in terms of university financial stability, is that they are one-time grants for specific projects and do nothing to address shortfalls in existing college and university budgets or the future budgets for newly created operations. They also require lawmakers who support higher education, who have the political influence to sway spending decisions, and who are willing to work within the existing political structure. For now, at least, that puts Kansas in a good position.

Doug Ward is an associate director at the Center for Teaching Excellence and an associate professor of journalism and mass communications.

Everything you need to know for April Fools' Day

By Doug Ward

A short history lesson:

April Fools’ Day originated in 1920, when Joseph C. McCanles (who was only vaguely related to the infamous 19th-century outlaw gang) ordered the KU marching band (then known as the Beak Brigade) to line up for practice on McCook Field (near the site of the current Great Dismantling).

It was April 1, and McCanles was not aware that the lead piccolo player, Herbert “Growling Dog” McGillicuddy, had conspired with the not-yet-legendary Phog Allen to play a practical joke.

McCanles, standing atop a peach basket, raised his baton and shouted, “March!”

Band members remained in place.

“March!” McCanles ordered again.

The band stood and stared.

Then McGillicuddy began playing “Yankee Doodle” on his piccolo and Allen, disguised in a Beak Brigade uniform, raised a drum stick (the kind associated with a drum, not a turkey) and joined the rest of the band in shouting: “It’s April, fool!”

McCanles fell off his peach basket in laughter, and a tradition was born.

Either that, or April Fools’ Day was created in France, or maybe in ancient Rome, or possibly in India. We still have some checking to do.

Regardless, we at CTE want you to know that we take our April Fools seriously – so seriously in fact that we have published the latest issue of Pupil magazine just in time for April Fools' Day.

As always, Pupil is rock-chalk full of news that you simply must know. It is best read with “The Washington Post March” playing in the background. We don’t like to be overly prescriptive, though, especially with all the strange happenings brought on by an impending solar eclipse.

Doug Ward is an associate director of the Center for Teaching Excellence and an associate professor of journalism and mass communications.

Why talking about AI has become like talking about sex

By Doug Ward

We need to talk.

Yes, the conversation will make you uncomfortable. It’s important, though. Your students need your guidance, and if you avoid talking about this, they will act anyway – usually in unsafe ways that could have embarrassing and potentially harmful consequences.

So yes, we need to talk about generative artificial intelligence.

Consider the conversation analogous to a parent’s conversation with a teenager about sex. Susan Marshall, a teaching professor in psychology, made that wonderful analogy recently in the CTE Online Working Group, and it seems to perfectly capture faculty members’ reluctance to talk about generative AI.

Like other faculty members, Marshall has found that AI creates solid answers to questions she poses on assignments, quizzes, and exams. That, she said, makes her feel like she shouldn't talk about generative AI with students because more information might encourage cheating. She knows that is silly, she said, but talking about AI seems as difficult as talking about condom use.

It can be, but as Marshall said, we simply must have those conversations.

Sex ed, AI ed

Having frank conversations with teenagers about sex, sexually transmitted diseases, and birth control can seem like encouragement to go out and do whatever they feel like doing. Talking with teens about sex, though, does not increase their likelihood of having sex. Just the opposite. As the CDC reports: “Studies have shown that teens who report talking with their parents about sex are more likely to delay having sex and to use condoms when they do have sex.”

Similarly, researchers have found that generative AI has not increased cheating. (I haven't found any research on talking about AI.)

That hasn't assuaged concern among faculty members. A recent Chronicle of Higher Education headline captures the prevailing mood: “ChatGPT Has Everyone Freaking Out About Cheating.”

When we freak out, we often make bad decisions. So rather than talking with students about generative AI or adding material about the ethics of generative AI, many faculty members chose to ignore it. Or ban it. Or use AI detectors as a hammer to punish work that seems suspicious.

All that has done is make students reluctant to talk about AI. Many of them still use it. The detectors, which were never intended as evidence of cheating and which have been shown to have biases toward some students, have also led to dubious accusations of academic misconduct. Not surprisingly, that has made students further reluctant to talk about AI or even to ask questions about AI policies, lest the instructor single them out as potential cheaters.

Without solid information or guidance, students talk to their peers about AI. Or they look up information online about how to use AI on assignments. Or they simply create accounts and, often oblivious and unprotected, experiment with generative AI on their own.

So yes, we need to talk. We need to talk with students about the strengths and weaknesses of generative AI. We need to talk about the ethics of generative AI. We need to talk about privacy and responsibility. We need to talk about skills and learning. We need to talk about why we are doing what we are doing in our classes and how it relates to students’ future.

If you aren’t sure how to talk with students about AI, draw on the many resources we have made available. Encourage students to ask questions about AI use in class. Make it clear when they may or may not use generative AI on assignments. Talk about AI often. Take away the stigma. Encourage forthright discussions.

Yes, that may make you and students uncomfortable at times. Have the talk anyway. Silence serves no one.

JSTOR offers assistance from generative AI

Ithaka S+R has released a generative AI research tool for its JSTOR database. The tool, which is in beta testing, summarizes and highlights key areas of documents, and allows users to ask questions about content. It also suggests related materials to consider. You can read more about the tool in an FAQ section on the JSTOR site.

Useful lists of AI-related tools for academia

While we are talking about Ithaka S+R, the organization has created an excellent overview of AI-related tools for higher education, assigning them to one of three categories: discovery, understanding, and creation. It also provides much the same information in list form on its site and on a Google Doc. In the overview, an Ithaka analyst and a program manager offer an interesting take on the future of generative AI:

These tools point towards a future in which the distinction between the initial act of identifying and accessing relevant sources and the subsequent work of reading and digesting those sources is irretrievably blurred if not rendered irrelevant. For organizations providing access to paywalled content, it seems likely that many of these new tools will soon become baseline features of their user interface and presage an era where that content is less “discovered” than queried and in which secondary sources are consumed largely through tertiary summaries.

Preparing for the next wave of AI

Dan Fitzpatrick, who writes and speaks about AI in education, frequently emphasizes the inevitable technological changes that educators must face. In his weekend email newsletter, he wrote about how wearable technology, coupled with generative AI, could soon provide personalized learning in ways that make traditional education obsolete. His question: “What will schools, colleges and universities offer that is different?”

In another post, he writes that many instructors and classes are stuck in the past, relying on outdated explanations from textbooks and worksheets. “It's no wonder that despite our best efforts, engagement can be a struggle,” he says, adding: “This isn't about robots replacing teachers. It's about kids becoming authors of their own learning.”

Introducing generative AI, the student

Two professors at the University of Nevada, Reno have added ChatGPT as a student in an online education course as part of a gamification approach to learning. The game immerses students in the environment of the science fiction novel and movie Dune, with students competing against ChatGPT on tasks related to language acquisition, according to the university.

That AI student has company. Ferris State University in Michigan has created two virtual students that will choose majors, join online classes, complete assignments, participate in discussion boards, and gather information about courses, Inside Higher Ed reports. The university, which is working with a Grand Rapids company called Yeti CGI on developing the artificial intelligence software for the project, said the virtual students’ movement through programs would help university officials better understand how to help real students, according to Michigan Live. Ferris State is also using the experiment to promote its undergraduate AI program.

Doug Ward is associate director of the Center for Teaching Excellence and an associate professor of journalism and mass communications.

Academic mindset and student attendance

Something has been happening with class attendance. Actually, there are several somethings, which I’ll get to shortly. First, though, consider this:

- Since the start of the pandemic, many students have treated class attendance as optional, making discussion and group interaction difficult.

- Online classes tend to fill quickly, and students who enroll in physical classes often ask for an option to “attend” via a video connection.

- Many K-12 schools report record rates of absences. Students from low-income families are especially likely to miss class, according to the Hechinger Report. In many cases, Hechinger says, parents have lost trust in school and don’t see it as a priority.

The first two points are anecdotal, but faculty nationwide have reported drops in attendance. This spring, some KU instructors say that students have been eager to participate in class, perhaps more so than at any time since the pandemic. In other cases, though, attendance remains spotty.

So what’s going on?

Here are a few observations:

- Instructors became more flexible during the pandemic, and students found that they didn’t need to attend class to succeed. They have continued to expect that same flexibility.

- As college grew more expensive, some students began seeing a degree as just another consumer product. They have long been told that a degree leads to higher incomes (which it does, although less so than it once did), so the degree (not the work along the way) becomes the focus. A 2010 study, for example, said that students who see education as a product are more likely “to feel entitled to receive positive outcomes from the university; they are not, however, any more likely to be involved in their education.”

- Many instructors say that a KU attendance policy approved last year has complicated things. That policy was intended to provide flexibility for students who have legitimate reasons for missing class. Many students and faculty have taken that to mean nearly any absence should be excused.

Broader trends are in play, as well:

- Many students in their teens and 20s feel that they “lost something in the pandemic,” as Time magazine describes it. Rather than building social networks and engaging with the world, they were forced to distance themselves. As a result, the “pandemic produced a social famine, and its after-effects persist,” Eric Klinenberg, a professor at New York University, writes in the Time article.

- Many students continue to struggle with depression, anxiety and other mental health issues, with 50% to 65% saying they frequently or always feel stressed, anxious or overwhelmed, according to a recent study.

A reassertion of independence

Students have also reasserted their independence as instructors have revised attendance policies and emphasized the importance of participation. A Fall 2022 opinion piece in the University Daily Kansan expressed a common sentiment.

“If professors make every class useful and engaging, then students who value their academic and future success will show up and be present in the learning,” Natalie Terranova, a journalism student, wrote in the Kansan. “Professors have a responsibility to the students to teach, but the students have a responsibility to themselves to prioritize what is most important to them.”

She’s right, of course, and her peers at many other student newspapers have made much the same argument. We all make choices about where to devote our time. If something is useful and important, we make time for it. If it isn’t, we don’t. And though students have long sought to declare their independence during their college years, their experiences during the pandemic seem to have made many of them more comfortable skipping class, seeing that as a right.

At the same time, faculty have come under increasing pressure to help students succeed. If too many students fail or withdraw, the instructor is often blamed. Many instructors, in turn, have made class attendance a component of students’ grades, with good reason. Considerable research suggests that students who attend class get better grades. Class is also part of a structure that improves learning, and a recent study says that students who commit to attending class are more likely to show up.

A high school teacher’s observations

A recent Substack article by a high school teacher offered some observations about student behavior that further illuminate the challenges surrounding attendance. That teacher, Nick Potkalitsky, who is also an education consultant in Ohio, says students are still stressed, lonely, and sometimes bitter about what they missed out on during the pandemic. They have trouble concentrating and require several reminders to focus on the task at hand. With more complex tasks, they need more scaffolding, direction, and oversight than they did before the pandemic.

He offered some additional insights from his interactions with students:

- They struggle to connect in person. Students were dependent on technology “for almost the entirety of their social, academic, and personal lives” during the pandemic, Potkalitsky writes. “Students hunger for connection,” he says, but face-to-face connection does not come easily to them. And if they don’t already belong to an online community, the strong bonds within those communities make it difficult for new members to fit in.

- They dislike classrooms, where they often struggle to stay focused. They gain energy from playgrounds, parks, hiking paths, and other outdoor settings that allow them to move.

- They crave immersion and autonomy. They like to immerse themselves in a subject, something he attributes to social media. “When school does not and cannot provide these kinds of stimulation, many students disengage and await the next opportunity to use their handheld devices,” he writes.

- They “are experiencing a crisis in trust in authorities and themselves.” They chafe at the idea of school returning to “normal,” and their wariness has been reinforced by schools’ clumsy response to generative AI. “This generation knows that it needs guidance, but desires the kind of assistance that empowers,” Potkalitsky says.

Yes, those are high school students, but they will soon be college freshmen. They also exhibit many of the same behaviors faculty have observed of KU students.

Jenny Darroch, dean of business at Miami University in Ohio, writes in Inside Higher Ed that faculty and administrators need “to recognize that today’s students engage differently — and did so before the pandemic. They expect to be recognized for the knowledge they have and their ability to self-direct as they learn and grow.”

Clearly, student attitudes, expectations, skills, needs, and behaviors are changing. Attendance is perhaps just the most visible place where we see those changes. Many – perhaps most – students care deeply about learning and take class attendance seriously. Many don’t, though, and the challenges of addressing that behavior are unlikely to fade anytime soon.

We have much work to do.

Need help? At CTE, we have provided advice about motivating students, balancing flexibility and structure, and using active learning and group work to make classes more engaging and to make the value of attending class more apparent.

Briefly …

- Online enrollment remains strong. A new analysis of federal data shows that enrollment in online courses remains strong even as enrollment in many in-person courses declines, the Hechinger Report says. That trend certainly holds true at KU, where the number of credit hours generated by online courses rose 17% in Fall 2023 compared with Fall 2022. The Fall 2023 totals are 49% higher than those in Fall 2019, the semester before the pandemic began in the U.S.

- An AI pilot through NSF. The National Science Foundation has begun a pilot of what it calls the National Artificial Intelligence Research Resource. It describes the project as “a concept for a national infrastructure that connects U.S. researchers to computational, data, software, model and training resources they need to participate in AI research.” NSF is working on the pilot with 10 federal agencies and 25 organizations (mostly technology companies). You can contribute your thoughts through a survey for faculty, researchers, and students. The survey is available until March 8.

Doug Ward is an associate director of the Center for Teaching Excellence and an associate professor of journalism and mass communications at the University of Kansas.

Enrollment trends suggest a changing educational landscape

KU’s big jump in freshman enrollment this academic year ran counter to broader trends in higher education.

Around the country, college enrollment has been trending downward (although there was a slight increase in 2023), many campuses have been closing or consolidating, and a drop in the birthrate after the 2008-09 recession has created what has become known as the “enrollment cliff.” That is, with fewer births, there will soon be fewer students graduating from high school and thus fewer potential college applicants.

KU had struggled, too: It was one of only 10 flagship universities where overall enrollment declined in the 2010s, according to a Chronicle of Higher Education analysis. In the fall, though, freshman enrollment increased 18%, to 5,259, the largest freshman class ever. If current trends continue, there may be another growth spurt next year. But why?

Flagships as backups

The university has credited the increase to reputation, recruitment strategies, increases in financial aid, and an improved football team. I have no doubt that recruitment strategies and financial aid played a significant role, and reputation always matters. I look for broader (often cultural) trends, though. Jeff Selingo, who writes about admissions, innovation, and the future of higher education, offers some possible explanations in his latest email newsletter. Selingo argues that many students, especially those from families in the top 5% to 10% of incomes, are going to state flagship universities if they don't get into top-ranked schools. He writes:

What I’m finding in my book research is that some families are increasingly skipping over this next ring of institutions from the very top because they don’t get good offers of merit aid. So, instead, the families chase dollars from a set of institutions deeper in the rankings or the kid heads off to an honors college at a flagship public with a low net price (sometimes zero) and lots of perks, like early access to course registration and sponsored research projects with faculty.

This idea of let’s try for Ivy U., and then if not, State U. has been common in some places like Georgia and Florida for decades ...

He highlights another trend that is certainly affecting KU: out-of-state enrollment growing faster than in-state enrollment.

“Nearly every public flagship enrolled a smaller share of freshmen from within their states in 2022 than they did two decades earlier,” Selingo said, citing a Chronicle analysis of data from the Department of Education.

At KU, the percentage of freshmen from Kansas fell to perhaps its lowest ever (56%) in Fall 2023, according to data from Analytics, Institutional Research, and Effectiveness. That is a decline of 13.1 percentage points from 2002, when in-state students made up 69.1% of the freshman class, according to the Chronicle analysis.

The number of Kansas students enrolled as freshmen at KU actually rose to at least a 10-year high, but the number of out-of-state students rose even more, with the university attracting more students from such states as Missouri, Nebraska, Illinois, Iowa, Colorado, Minnesota, and Oklahoma.

The declining percentage of in-state freshmen at KU is actually less substantial than that at some other state universities. Here are a few examples from nearby states, drawing on data from the Chronicle analysis.

| University | In-state share of freshmen, 2002 (%) | In-state share of freshmen, 2022 (%) | Change (percentage points) |
|---|---|---|---|
| Colorado | 54.9 | 53.6 | -1.3 |
| Iowa | 59.5 | 53.8 | -5.7 |
| Nebraska | 82.9 | 73.0 | -9.9 |
| KU | 69.1 | 57.6 | -11.5 |
| Missouri | 82.6 | 69.9 | -12.7 |
| South Dakota | 67 | 52.8 | -14.2 |
| Illinois | 89.2 | 71.4 | -17.8 |
| Indiana | 65.1 | 52.3 | -17.8 |
| Ohio State | 84.6 | 66.7 | -17.9 |
| Wisconsin | 64.2 | 43.8 | -20.4 |
| Oklahoma | 76.3 | 52.9 | -23.4 |
| Arkansas | 80.5 | 39.3 | -41.2 |
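The last column of the table measures change in percentage points, which is not the same as a percent change. As a minimal sketch (the helper name `share_change` is hypothetical, and only three rows are copied from the table), the Python below computes both measures side by side. KU's drop of 11.5 points, for instance, works out to roughly a 16.6% relative decline in its in-state share.

```python
# Hypothetical helper for illustration; the name is not from the Chronicle data.
def share_change(share_2002: float, share_2022: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent), rounded to 0.1."""
    points = share_2022 - share_2002           # simple difference between two shares
    relative = points / share_2002 * 100       # that difference as a share of the 2002 value
    return round(points, 1), round(relative, 1)


# In-state freshman shares (%) copied from three rows of the table.
shares = {
    "KU": (69.1, 57.6),
    "Nebraska": (82.9, 73.0),
    "Arkansas": (80.5, 39.3),
}

for school, (before, after) in shares.items():
    points, relative = share_change(before, after)
    print(f"{school}: {points:+.1f} points ({relative:+.1f}% relative)")
```

The same two-step calculation applies to any row: subtract to get percentage points, then divide by the starting share to get the relative change.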

If the trends that Selingo identified hold, KU could see continued growth among out-of-state students, especially those with family incomes of $160,000 and up. The trends also suggest that attracting those students will require higher levels of financial aid, admission to the honors program, and opportunities to work with individual faculty members. In other words, out-of-state students who are rejected by the Ivy League and similar highly ranked schools expect more perks from KU and other state flagship universities.

The sudden growth has brought in additional money to the university, but Jeff DeWitt, KU's chief financial officer, said in a presentation in November that the university had spent millions of additional dollars on scholarships, instructors, advisors, and housing.

"Record enrollment is not free," DeWitt said.

The trends that are benefiting KU and other state flagship universities have made recruitment more difficult at regional universities, the Chronicle reports. Among Kansas regents universities, for instance, only KU (4.1%) and Wichita State (5.1%) have increased enrollment over the past decade. Three others have had dramatic decreases: Pittsburg State (-25.7%), Emporia State (-25.1%), and K-State (-21.5%). Fort Hays State’s enrollment fell 5.7% during the same period. (I’ve excluded the medical center and K-State’s veterinary medicine program, both of which have increased in enrollment but are still relatively small.)

All of that portends a very different look to higher education in Kansas in the coming years.

Are student-athletes employees?

A case before the National Labor Relations Board could force colleges and universities to designate athletes as employees and to pay them as such, Politico reports.

Depending on your perspective, that could either give student-athletes what they are rightfully owed or lead to the collapse of college sports, Politico says.

One passage from the Politico article offered an interesting interpretation of how colleges and universities have looked at athletes:

Pro-labor advocates argue that schools’ “student-athlete” designation is a legal term of art originally designed to shield institutions from player workers’ compensation claims. It deprives competitors of fair compensation for their talents or influence over the system that governs much of their day-to-day college experience, they note.

The NCAA and colleges and universities say, however, that college sports would not survive in their current form if designations were changed. As a result, Politico said, they may seek intervention from Congress if the NLRB forces them to pay athletes.

A ruling is expected in the early spring.

Doug Ward is an associate director at the Center for Teaching Excellence and an associate professor of journalism and mass communications.

It’s a new semester. Do you know where the polar bear is?

We don’t know the last time the first day of classes was canceled.

We’re guessing it was January 1892, when the temperature fell to minus 23, the bottoms of thermometers shattered, and students started using the phrase “froze my bottom off” (or something approximating that).

Of course, everyone was hardier back then, having to walk five miles to campus barefoot through the snow and fend off wolves with their bare, frostbitten hands and all. At least that’s what our elders told us. So everyone may have just shrugged off the lethally cold temperatures in 1892 and shown up for class as usual.

Unfortunately, Pupil magazine didn’t exist then, so we may never know. Thankfully, it does exist today, and we have a new issue available! (No applause necessary. Our frozen fingers and toes and ears and hair follicles are tender, too.) After hunkering down in a perpetual shiver for five straight days, you no doubt need a laugh.

Please don’t laugh too hard, though. The polar bear may hear you.

Polar bear?

Shh. Have a great start to the semester!

Doug Ward is associate director of the Center for Teaching Excellence and an associate professor of journalism and mass communications.