40 days (and 40 nights?) of teaching in confinement: A diary

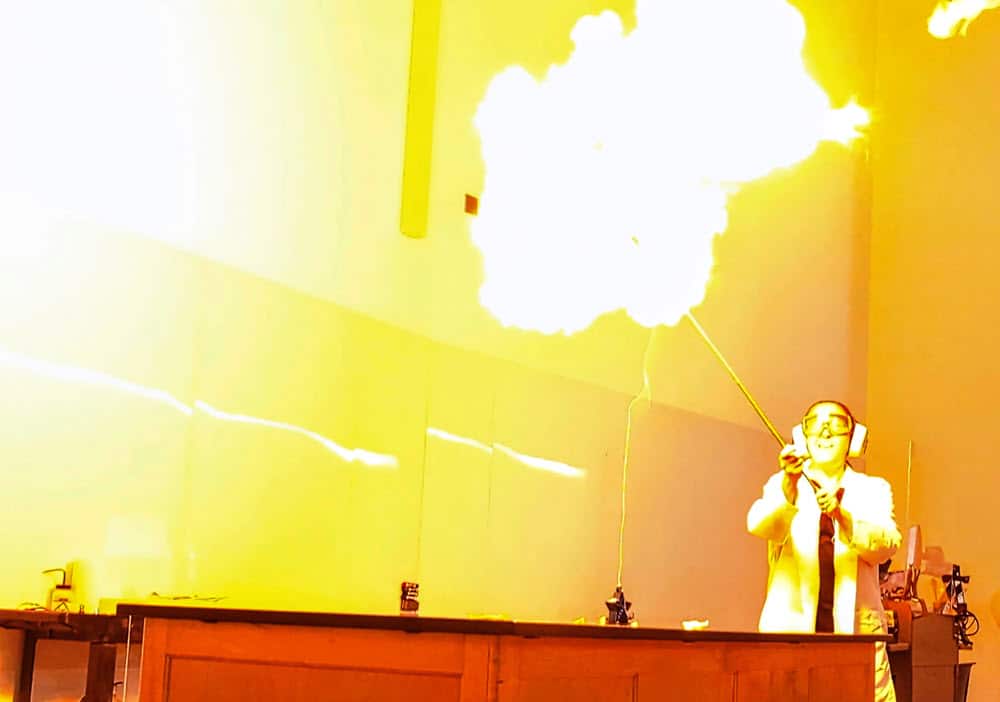

The shift to remote teaching this semester quickly became a form of torture by isolation inflicted upon us by microscopic organisms. There has to be a bright spot somewhere, though. Right?